For two years, enterprise AI pilots shared the same confession: “Our agents can reason brilliantly, but we can’t actually let them touch production systems.” The missing layer was not intelligence — it was safe, isolated execution. On April 15, 2026, OpenAI closed that gap with a significant update to its Agents SDK, introducing native sandbox execution that gives AI agents a controlled workspace to read and write files, install dependencies, run code, and use tools — all without touching the broader enterprise environment. The move signals that the agentic AI moment is no longer a forecast; it is a deployment requirement.

The core problem the update solves is architectural. Agents that operate on real enterprise tasks — drafting legal briefs, writing and executing data pipelines, debugging production code — need a filesystem, a shell, and the ability to install dependencies. Before this update, developers had to assemble this execution layer themselves, stitching together Docker containers, cloud VMs, or third-party sandboxes with custom credential management. The friction was enough to keep most enterprise deployments stuck at proof-of-concept. OpenAI’s SDK now ships with that entire layer built in, with standardized integrations to seven sandbox providers — Blaxel, Cloudflare, Daytona, E2B, Modal, Runloop, and Vercel — and an open adapter interface for custom environments.

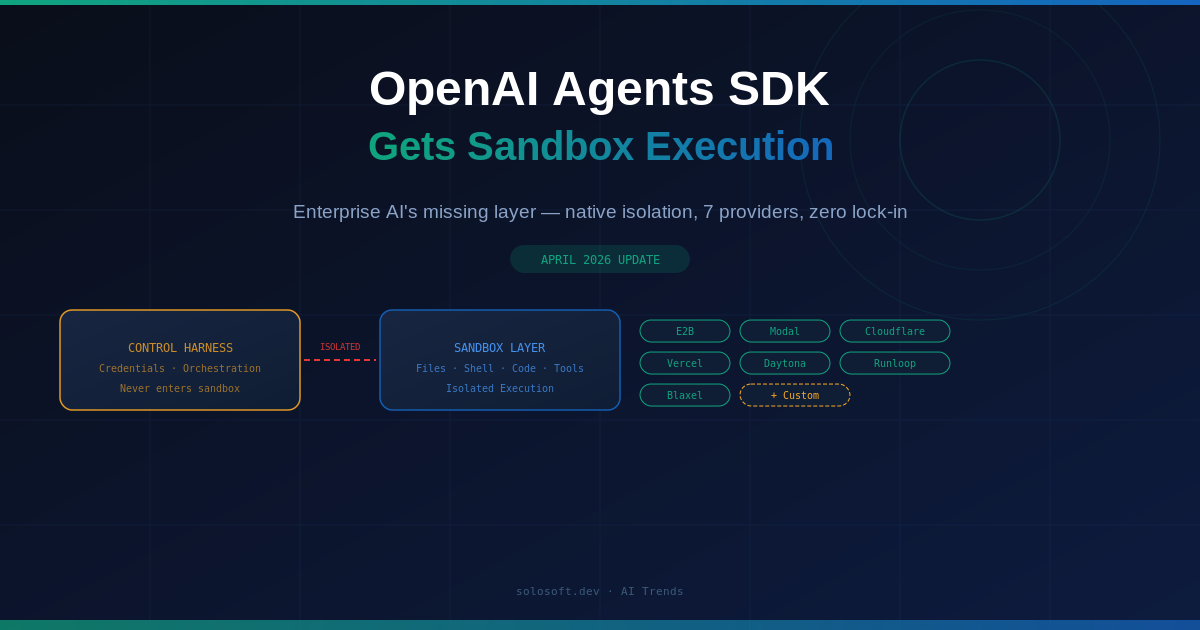

The security architecture is the more consequential innovation. OpenAI has separated the control harness (where credentials live and orchestration decisions are made) from the compute layer (where model-generated code actually runs). The isolation is designed so that a compromised or misbehaving agent has no credentials to steal and no direct path to escalate privileges into the host system. For CISOs at financial services and healthcare firms, the two verticals leading enterprise agentic adoption, this boundary was a prerequisite before any production approval could be granted.

This is not OpenAI shipping a shiny demo. It is shipping the plumbing that enterprise risk and compliance teams have been waiting for.

What Is the New Sandboxing Feature in the OpenAI Agents SDK?

The April 2026 update adds a model-native execution harness with configurable memory, sandbox-aware orchestration, and filesystem tools modeled on the Codex workflow. Out of the box, agents can run in sandboxed environments with the files, tools, and compute they need — without developers manually constructing that layer.

Previously, building an agent that could safely manipulate files or run shell commands required assembling custom infrastructure around the SDK. The new harness standardizes this: developers declare a sandbox provider, configure memory and tool access, and the SDK handles the execution layer. That removes an entire infrastructure project from the path between a working prototype and a production deployment of an enterprise agent.
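To make "declare a provider, configure tool access" concrete, here is a minimal Python sketch of what such a declaration could look like. `SandboxConfig`, `KNOWN_PROVIDERS`, and `permits` are hypothetical names invented for illustration, not the Agents SDK's API. Memory settings are left out because the article does not say what form they take.

```python
from dataclasses import dataclass, field

# Hypothetical: provider names from the article's list, plus "custom" for the
# open adapter interface. This is NOT the Agents SDK's configuration API.
KNOWN_PROVIDERS = frozenset(
    {"blaxel", "cloudflare", "daytona", "e2b", "modal", "runloop", "vercel", "custom"}
)


@dataclass(frozen=True)
class SandboxConfig:
    """Hypothetical declarative sandbox settings (illustration only)."""

    provider: str
    allowed_tools: frozenset[str] = field(default_factory=frozenset)

    def __post_init__(self) -> None:
        # Fail fast on a misspelled provider instead of at run time.
        if self.provider not in KNOWN_PROVIDERS:
            raise ValueError(f"unknown sandbox provider: {self.provider!r}")

    def permits(self, tool: str) -> bool:
        # Deny by default: only tools listed explicitly are available.
        return tool in self.allowed_tools


config = SandboxConfig(
    provider="e2b",
    allowed_tools=frozenset({"read_file", "write_file", "shell"}),
)
print(config.permits("shell"))         # True
print(config.permits("http_request"))  # False
```

A deny-by-default tool list keeps the declaration auditable: anything the agent may do is written down in one place.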

Why Did Enterprise AI Deployments Stall Without Sandbox Execution?

Enterprise AI deployments stalled at the pilot stage because production systems demand controlled, auditable execution — not just capable reasoning. Agents that can only return text cannot automate the file-intensive, code-running workflows that justify AI investment. The sandbox gap forced every enterprise team to reinvent the same dangerous infrastructure.

The pattern was consistent across industries. A legal AI that can draft a contract is useful; one that can also run document comparison scripts, generate redlines, and write the result back to the document management system (DMS) is transformative. Without a safe execution layer, agents could reason about a task but could not carry it out. The new SDK closes that gap by making execution a standard capability rather than a custom build.
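As an illustration of the "document comparison script" step, the sketch below is the kind of small program an agent might write and run inside its sandbox. It uses only Python's standard-library `difflib` to produce a plain-text unified diff between two contract versions. The clause text and file names are invented sample data, and producing a formatted redline or writing back to a DMS is deliberately out of scope.

```python
import difflib

# Invented sample clauses for two versions of a contract.
original = [
    "The Supplier shall deliver the goods within 30 days.\n",
    "Payment is due within 60 days of invoice.\n",
    "This agreement is governed by the laws of Delaware.\n",
]
revised = [
    "The Supplier shall deliver the goods within 14 days.\n",
    "Payment is due within 60 days of invoice.\n",
    "This agreement is governed by the laws of New York.\n",
]


def redline(
    old: list[str],
    new: list[str],
    old_name: str = "contract_v1.txt",
    new_name: str = "contract_v2.txt",
) -> str:
    """Return a unified diff: '-' lines were removed, '+' lines were added."""
    return "".join(difflib.unified_diff(old, new, fromfile=old_name, tofile=new_name))


print(redline(original, revised))
```

Unchanged context lines start with a space, so a reviewer sees each changed clause next to the clauses around it.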

| Capability | Before SDK Update | After SDK Update |
|---|---|---|
| File read / write | Manual Docker setup required | Native, out of the box |
| Dependency installation | Custom VM provisioning | Handled by sandbox provider |
| Shell command execution | Dangerous without isolation | Isolated compute layer |
| Credential management | Developer responsibility | Harness-level isolation |
| Multi-provider support | None | 7 providers + custom |
| Security audit trail | Custom logging required | Standardized harness events |
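The "standardized harness events" row is easiest to picture as an append-only log written on the harness side, where sandboxed code cannot alter it. The following is a minimal sketch under that assumption. `HarnessEvent`, `AuditLog`, and the event kinds are hypothetical and do not describe the SDK's real event format.

```python
import io
import json
import time
from dataclasses import asdict, dataclass
from typing import Any, TextIO


@dataclass(frozen=True)
class HarnessEvent:
    """Hypothetical event schema; the SDK's real event format may differ."""

    run_id: str
    kind: str  # e.g. "sandbox_exec" or "file_write" (illustrative values)
    detail: dict[str, Any]
    ts: float


class AuditLog:
    """Append-only JSON-lines log that lives in the harness, outside the sandbox."""

    def __init__(self, sink: TextIO) -> None:
        self._sink = sink

    def record(self, run_id: str, kind: str, **detail: Any) -> HarnessEvent:
        event = HarnessEvent(run_id=run_id, kind=kind, detail=detail, ts=time.time())
        # One JSON object per line keeps the log easy to ship to a SIEM or grep.
        self._sink.write(json.dumps(asdict(event), sort_keys=True) + "\n")
        return event


sink = io.StringIO()  # stand-in for an append-only file or log pipeline
log = AuditLog(sink)
log.record("run-42", "sandbox_exec", command="python redline.py", exit_code=0)
log.record("run-42", "file_write", path="/workspace/redline.diff", size_bytes=512)
for line in sink.getvalue().splitlines():
    print(json.loads(line)["kind"])  # sandbox_exec, then file_write
```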

How Does the Security Architecture Protect Enterprise Systems?

OpenAI separates the control harness, where credentials and orchestration logic reside, from the sandbox compute layer, where model-generated code runs. Because credentials never enter the environment where untrusted code executes, a compromised agent workspace has no secrets to exfiltrate, which sharply limits lateral movement.

This two-layer model mirrors patterns already proven in cloud security: assume the execution environment can be compromised, and design so that a breach there cannot propagate. For enterprises with SOC 2 Type II or ISO 27001 requirements, the architecture gives auditors a standard isolation boundary to assess, instead of a bespoke one each team must build and document.
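The core principle, that credentials stay with the harness and never reach the execution environment, can be sketched with Python's standard library. This is only an illustration under a POSIX assumption: it runs untrusted code in an ordinary subprocess with an allowlisted environment and a throwaway working directory, which gives none of the filesystem, network, or kernel isolation that a real sandbox provider supplies. `HARNESS_SECRET_TOKEN` and `SAFE_ENV_KEYS` are hypothetical names.

```python
import os
import subprocess
import sys
import tempfile

# Allowlist of variables forwarded to untrusted code; everything else stays behind.
SAFE_ENV_KEYS = ("PATH", "LANG")


def sandbox_env() -> dict[str, str]:
    """Build the child environment from an allowlist rather than a denylist."""
    return {key: os.environ[key] for key in SAFE_ENV_KEYS if key in os.environ}


def run_untrusted(code: str) -> subprocess.CompletedProcess:
    # A fresh temporary working directory per run; deleted afterwards.
    with tempfile.TemporaryDirectory() as workdir:
        return subprocess.run(
            [sys.executable, "-I", "-c", code],  # -I: ignore PYTHON* vars and user site
            cwd=workdir,
            env=sandbox_env(),
            capture_output=True,
            text=True,
            timeout=10,
        )


# The harness holds a (hypothetical) credential in its own process environment...
os.environ["HARNESS_SECRET_TOKEN"] = "placeholder-not-a-real-secret"
# ...but code executed through run_untrusted cannot read it.
result = run_untrusted("import os; print(os.environ.get('HARNESS_SECRET_TOKEN'))")
print(result.stdout.strip())  # None
```

Building the child environment from an allowlist, rather than deleting known secrets, is the safer choice: a credential added to the harness later is excluded automatically.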

```mermaid
graph TD
    A[Enterprise Application] --> B[Control Harness]
    B --> C[Credentials and Orchestration]
    B --> D[Sandbox Execution Layer]
    D --> E[File System Access]
    D --> F[Shell and Code Runner]
    D --> G[Installed Dependencies]
    C -.->|Never enters| D
    style C fill:#f9a825,color:#000
    style D fill:#1565c0,color:#fff
```

Which Sandbox Providers Does the SDK Now Support?

The updated SDK ships with standardized integrations for seven providers: Blaxel, Cloudflare, Daytona, E2B, Modal, Runloop, and Vercel. Developers can also plug in their own sandbox via an open adapter interface, preserving flexibility for enterprises with existing infrastructure investments.
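An adapter interface of this kind is usually a small contract that every backend implements. The sketch below expresses one as a Python `typing.Protocol`, with a toy temp-directory backend and a registry the orchestration layer could dispatch through. `SandboxAdapter`, `LocalDirSandbox`, `open_sandbox`, and their method names are hypothetical; they are not the SDK's adapter API, and the toy backend provides no real isolation.

```python
import subprocess
import sys
import tempfile
from pathlib import Path
from typing import Callable, Protocol


class SandboxAdapter(Protocol):
    """Hypothetical adapter contract; not the Agents SDK's actual interface."""

    def write_file(self, path: str, content: str) -> None: ...
    def read_file(self, path: str) -> str: ...
    def run(self, argv: list[str]) -> tuple[int, str]: ...
    def close(self) -> None: ...


class LocalDirSandbox:
    """Toy backend: a temp directory on the host. It provides NO real isolation."""

    def __init__(self) -> None:
        self._tmp = tempfile.TemporaryDirectory()
        self.root = Path(self._tmp.name).resolve()

    def _resolve(self, path: str) -> Path:
        target = (self.root / path).resolve()
        # Refuse paths that escape the workspace, such as "../secrets.txt".
        if not target.is_relative_to(self.root):
            raise PermissionError(f"path escapes workspace: {path}")
        return target

    def write_file(self, path: str, content: str) -> None:
        target = self._resolve(path)
        target.parent.mkdir(parents=True, exist_ok=True)
        target.write_text(content)

    def read_file(self, path: str) -> str:
        return self._resolve(path).read_text()

    def run(self, argv: list[str]) -> tuple[int, str]:
        # Commands execute with the workspace as their working directory.
        proc = subprocess.run(argv, cwd=self.root, capture_output=True, text=True, timeout=30)
        return proc.returncode, proc.stdout

    def close(self) -> None:
        self._tmp.cleanup()


# Hypothetical registry: a real setup would map provider names to provider-backed adapters.
ADAPTERS: dict[str, Callable[[], SandboxAdapter]] = {"local": LocalDirSandbox}


def open_sandbox(provider: str) -> SandboxAdapter:
    return ADAPTERS[provider]()


box = open_sandbox("local")
box.write_file("job/hello.py", "print('hello from the workspace')")
code, out = box.run([sys.executable, "job/hello.py"])
print(code, out.strip())  # 0 hello from the workspace
box.close()
```

Because the orchestration code depends only on the protocol, swapping the toy backend for a provider-backed one is a registry change rather than a rewrite.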

Each provider covers a different deployment profile. E2B and Modal are favored for data-science and ML workloads. Cloudflare and Vercel serve edge-first, low-latency use cases. Daytona and Runloop target development environment cloning. Blaxel positions for multi-agent orchestration. The diversity of options reflects OpenAI’s strategy of owning the orchestration layer while remaining agnostic at the compute layer.

| Provider | Best For | Deployment Model |
|---|---|---|
| E2B | Data science and ML agent workflows | Cloud microVMs |
| Modal | Serverless GPU and CPU compute | Serverless |
| Cloudflare | Edge-first, low-latency tasks | Edge network |
| Vercel | Frontend-adjacent agent workflows | Serverless edge |
| Daytona | Dev environment cloning | Self-hosted or cloud |
| Runloop | Enterprise dev environment agents | Cloud |
| Blaxel | Multi-agent orchestration | Cloud |

What Is the Broader Impact on the Enterprise Agentic AI Race?

The sandbox update accelerates enterprise agentic adoption by removing one of the largest remaining technical blockers: safe autonomous execution. With secure isolation now a standard SDK feature rather than a custom engineering project, competition among enterprise AI vendors shifts toward reasoning quality, tool integrations, and multi-agent orchestration.

Anthropic, Google, and Microsoft have been competing fiercely on the same enterprise agentic tier. Anthropic’s Claude Managed Agents — launched earlier this quarter — provides built-in memory, permissions, and monitoring. Google’s Vertex AI Agent Builder offers deep GCP integration. OpenAI’s SDK update is a direct counter: standardized execution infrastructure across any cloud provider, not tied to a specific platform. The battle for enterprise agents is now a fight for the orchestration standard.

```mermaid
flowchart LR
    A[Prompt and Task Input] --> B[OpenAI Control Harness]
    B --> C{Sandbox Provider}
    C --> D[E2B]
    C --> E[Modal]
    C --> F[Cloudflare]
    C --> G[Daytona]
    C --> H[Vercel]
    C --> I[Runloop]
    C --> J[Blaxel]
    C --> K[Custom Adapter]
    D & E & F & G & H & I & J & K --> L[Isolated Code Execution]
    L --> M[Result Returned to Harness]
    M --> N[Enterprise Application Output]
```

| Platform | Sandbox Approach | Multi-Agent | Cloud Lock-in |
|---|---|---|---|
| OpenAI Agents SDK | 7 providers plus custom | Via subagents | None |
| Anthropic Claude Managed Agents | Proprietary | Yes | Anthropic API |
| Google Vertex AI Agent Builder | GCP Compute | Yes | GCP |
| Microsoft Copilot Studio | Azure Container Apps | Limited | Azure |

FAQ

What is the OpenAI Agents SDK sandbox update?

OpenAI updated its Agents SDK in April 2026 to include native sandbox execution environments. Agents can now read and write files, install dependencies, run code, and use tools inside controlled compute environments — removing the need for developers to stitch together their own isolation layer from scratch.

Which sandbox providers does the new Agents SDK support?

The updated SDK offers built-in integrations with seven providers: Blaxel, Cloudflare, Daytona, E2B, Modal, Runloop, and Vercel. Developers can also bring their own custom sandbox environments if their infrastructure requirements fall outside these options.

How does the SDK separate the control harness from compute?

OpenAI’s security model keeps credentials and orchestration logic entirely in the control harness, separate from the sandbox where model-generated code executes. A compromised agent workspace therefore has no credentials to misuse and no direct route to escalate into the broader system, a key requirement for regulated enterprise environments.

Is the new Agents SDK available in TypeScript?

At launch, the sandbox and new harness capabilities are Python-only. TypeScript support is on the roadmap, and OpenAI has stated it intends to bring all new agent capabilities — including code mode and subagents — to both languages.

How is the updated Agents SDK priced?

OpenAI is offering the new sandbox and harness capabilities to all API customers at standard API pricing. There is no separate sandbox tier — costs are metered through the usual token-based billing model.

What does this mean for enterprises in regulated industries?

The control-harness / compute-layer separation provides the isolation boundary that compliance teams at financial services and healthcare firms require. Instead of reviewing custom-built infrastructure, CISOs can evaluate production agentic deployments against a security perimeter that is standardized and auditable by default.